Photo · Ilya Semenov / Unsplash

You now know the chef has switches and tiers of pockets. But what does an "instruction" actually look like? When the chef reaches into RAM and reads "the next thing to do", what arrives?

A number. That's it. The instruction is a number.

And the chef has, taped to the wall, a card catalogue. Each entry says: "if you see this number, do that." The catalogue is small — about a hundred entries. Some say "add". Some say "copy". Some say "if these two are equal, jump to that other place". Almost nothing else. Every video game, every browser, every neural network you have ever run is woven from this hundred-word vocabulary, executed a few billion times a second.

An instruction is a number

Suppose we want the chef to add 5 to the contents of register 3. On a typical CPU, that becomes a single 32-bit pattern, which can be written down three ways: as binary, as hex, or as assembly.

Three representations, the same fact. The CPU only ever sees the binary. Hex is for humans counting bits. Assembly is for humans doing real work. Programming languages (C, Swift, Python, Rust) are for humans who would rather not think about bits at all. But the bottom is always those four bytes. The chef reads them and understands: the opcode says ADD; the first source is register 3; the second source is the literal number 5; the destination is register 3.
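As one concrete illustration, here is a minimal sketch that packs and unpacks that instruction. It assumes the 64-bit ARM (AArch64) "ADD immediate" layout, chosen for this example rather than taken from the text above; the names `encode_add_imm`, `decode_add_imm`, and `AddImm` are made up for the sketch. Under that assumption, `add x3, x3, #5` is the hex word `0x91001463`, or `1001 0001 0000 0000 0001 0100 0110 0011` in binary.

```c
#include <assert.h>
#include <stdint.h>

/* Fields of one AArch64 "ADD Xd, Xn, #imm12" instruction (no shift). */
typedef struct {
    uint32_t rd;     /* destination register number */
    uint32_t rn;     /* first source register number */
    uint32_t imm12;  /* the literal number, 0..4095 */
} AddImm;

/* Fixed opcode bits for this one instruction form (assumed layout). */
#define ADD_IMM_OPCODE 0x91000000u
#define ADD_IMM_MASK   0xFFC00000u

/* Pack the three fields into a single 32-bit number. */
uint32_t encode_add_imm(uint32_t rd, uint32_t rn, uint32_t imm12) {
    /* Register number 31 means the stack pointer here, so the sketch skips it. */
    assert(rd < 31 && rn < 31 && imm12 < 4096);
    return ADD_IMM_OPCODE | (imm12 << 10) | (rn << 5) | rd;
}

/* Pull the fields back out, the way the chef's decoder would. */
AddImm decode_add_imm(uint32_t word) {
    assert((word & ADD_IMM_MASK) == ADD_IMM_OPCODE);
    AddImm fields;
    fields.rd    = word & 0x1F;           /* bits 4..0   */
    fields.rn    = (word >> 5) & 0x1F;    /* bits 9..5   */
    fields.imm12 = (word >> 10) & 0xFFF;  /* bits 21..10 */
    return fields;
}
```

Calling `encode_add_imm(3, 3, 5)` yields `0x91001463`, and decoding that word gives back register 3, register 3, and the literal 5. Other instruction sets lay the fields out differently, but the idea is the same: shifts and masks over one number.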

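The whole instruction set, the catalogue, is called the ISA: Instruction Set Architecture. It's the contract between the chef and everyone above. As long as both sides honour the contract, the chef can be replaced underneath without anyone noticing: a new chip generation runs old programs unchanged. Even the contract itself can be swapped, provided the software is recompiled or translated. Apple has done this more than once, most recently from late 2020, when Macs began moving from x86 to ARM64 and Rosetta 2 translated the apps that had not yet been rebuilt. Most users didn't notice.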

The fetch–decode–execute cycle

The chef's life is a single, tiny loop, repeated three to five billion times a second:

Three steps, on repeat, forever. The chef has a single special register called the program counter, which always holds the address of the next instruction. Fetch reads from that address. Decode looks up what to do. Execute does it, and the program counter advances to the next instruction, unless the instruction was a jump, in which case the jump overwrites it. Around again.
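The loop is small enough to sketch in a few lines of C. Everything here is hypothetical: the three-entry catalogue (`OP_HALT`, `OP_LOADI`, `OP_ADD`), the bit layout, and the helper names are invented for illustration and do not match any real CPU.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical three-entry catalogue; not any real CPU's instruction set. */
enum { OP_HALT = 0, OP_LOADI = 1, OP_ADD = 2 };
enum { NUM_REGS = 4 };

/* Assumed layout of one 32-bit instruction:
   bits 31-24 opcode, bits 23-16 destination register, bits 15-0 argument. */
uint32_t make_instr(uint32_t op, uint32_t rd, uint32_t arg) {
    assert(op < 256 && rd < NUM_REGS && arg < 65536);
    return (op << 24) | (rd << 16) | arg;
}

/* Runs until HALT, an unknown opcode, or the end of memory; returns register 0. */
uint32_t run(const uint32_t *memory, uint32_t length) {
    uint32_t regs[NUM_REGS] = {0};
    uint32_t pc = 0;                                    /* program counter */
    while (pc < length) {
        uint32_t instr = memory[pc];                    /* fetch */
        uint32_t op  = instr >> 24;                     /* decode */
        uint32_t rd  = ((instr >> 16) & 0xFF) % NUM_REGS;
        uint32_t arg = instr & 0xFFFF;
        pc += 1;                                        /* point at the next instruction */
        switch (op) {                                   /* execute */
        case OP_LOADI: regs[rd] = arg; break;           /* rd = literal */
        case OP_ADD:   regs[rd] += regs[arg % NUM_REGS]; break; /* rd += register */
        case OP_HALT:  return regs[0];
        default:       return regs[0];                  /* unknown number: stop */
        }
    }
    return regs[0];
}
```

A four-instruction program (`LOADI r0, 2`, `LOADI r1, 40`, `ADD r0, r1`, `HALT`) built with `make_instr` and passed to `run` leaves 42 in register 0. The program is just an array of numbers; the loop gives them meaning.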

A modern CPU does several of these at once, in a pipeline — fetching the next instruction while still decoding the current one and executing the previous. It's a kitchen line: one chef plating last order's dessert while another preps tomorrow's mirepoix. Same idea. Different wave of the loop.
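A rough way to see what the pipeline buys is to count cycles. The sketch below assumes an idealized machine: every stage takes one cycle, there are no stalls, and one instruction enters the pipeline per cycle. Real chips fall short of this, and the function names are invented for the example.

```c
#include <assert.h>
#include <stdint.h>

/* Without a pipeline, each instruction passes through every stage alone. */
uint64_t cycles_unpipelined(uint64_t instructions, uint64_t stages) {
    assert(stages > 0);
    return instructions * stages;
}

/* With an ideal pipeline, the first instruction takes `stages` cycles
   and each later one finishes one cycle after its predecessor. */
uint64_t cycles_pipelined(uint64_t instructions, uint64_t stages) {
    assert(stages > 0);
    if (instructions == 0) {
        return 0;
    }
    return stages + instructions - 1;
}
```

For 1,000 instructions on a three-stage fetch, decode, execute line, that is 3,000 cycles without the pipeline and 1,002 with it: close to three times the throughput from the same loop.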

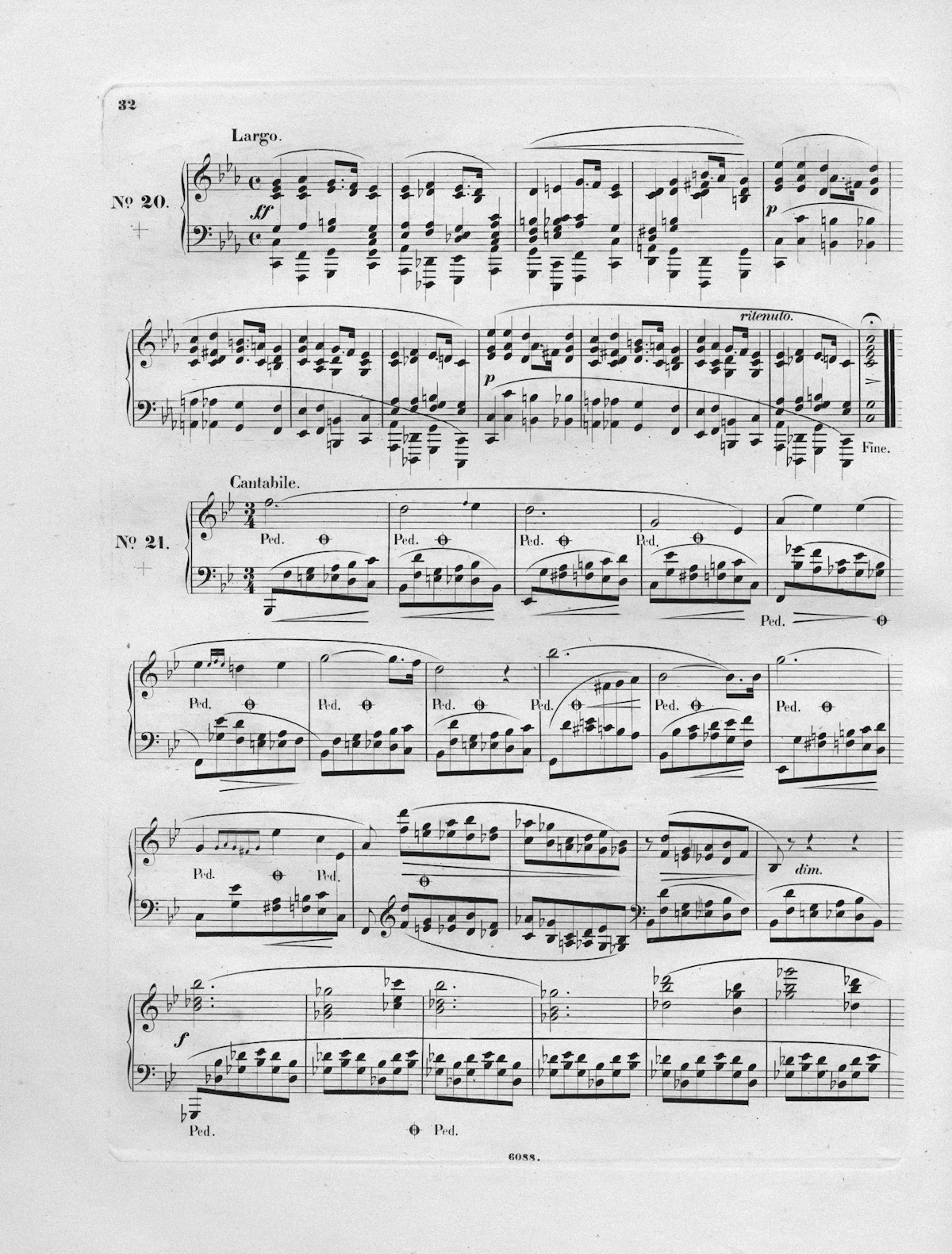

Photo · Europeana / Unsplash

Machine code is sheet music. A long stream of small, ordered instructions, played one after another at unimaginable speed.

The vocabulary — five families

Nearly every instruction in every modern CPU lives in one of five buckets. Phase 7's neural networks, last week's video game, and this week's banking app all use verbs that boil down to these:

Data movement

MOV · LDR · STR

Copy bytes between registers, and between RAM and registers (the caches sit in between and are managed by the hardware). Roughly 30% of the instructions a typical program executes.

Arithmetic

ADD · SUB · MUL · DIV

The math the CPU is good at. Integer addition and subtraction in about one cycle. Multiplication and floating point in three or four. Division in twenty or so. Exact counts vary from chip to chip.

Bitwise logic

AND · OR · XOR · SHL · SHR

Manipulating individual bits. Shifting them left and right. The plumbing of every cryptographic routine.

Comparison

CMP · TEST

Set hidden "flag" bits based on whether two values are equal, or one is bigger, or the result was zero. Used by jumps.

Control flow

JMP · BEQ · CALL · RET

Change the program counter. Conditionally or unconditionally. The atom of every if, while, for, and function call.

Yes, there are SIMD instructions for parallel math (Phase 7 calls these into duty for AI), and various exotic ones for atomics and memory barriers. But the spine of every program ever written is those five families.
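The hand-off between the comparison and control-flow families can be sketched in C. This is a model of ARM-style condition flags (N, Z, C, V) for a 32-bit compare, written for illustration; the names `Flags`, `compare`, and the `branch_if_*` helpers are invented, and other CPUs name and combine their flags somewhat differently.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* The hidden flag bits a compare leaves behind (ARM-style naming). */
typedef struct {
    bool n;  /* result was negative (top bit set)  */
    bool z;  /* result was zero                    */
    bool c;  /* no borrow: a >= b as unsigned      */
    bool v;  /* signed overflow occurred           */
} Flags;

/* CMP: subtract b from a, keep only the flags, discard the result. */
Flags compare(uint32_t a, uint32_t b) {
    uint32_t result = a - b;
    Flags f;
    f.n = (result >> 31) != 0;
    f.z = (result == 0);
    f.c = (a >= b);
    f.v = (((a ^ b) & (a ^ result)) >> 31) != 0;
    return f;
}

/* Conditional jumps only look at the flags, never at a or b. */
bool branch_if_equal(Flags f)          { return f.z; }
bool branch_if_signed_less(Flags f)    { return f.n != f.v; }
bool branch_if_unsigned_lower(Flags f) { return !f.c; }

/* Small usage example. */
void flags_demo(void) {
    assert(branch_if_equal(compare(7, 7)));
    assert(branch_if_signed_less(compare(3, 5)));
    /* -1 as a bit pattern is the largest unsigned value, so it is not "lower" than 1. */
    assert(!branch_if_unsigned_lower(compare(0xFFFFFFFFu, 1)));
    assert(branch_if_signed_less(compare(0xFFFFFFFFu, 1)));
}
```

The same bit pattern compares differently depending on which jump reads the flags, which is why instruction sets offer separate signed and unsigned conditional branches.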

An entire program in seven instructions

"Add up the numbers from 1 to 100, then stop." The C version is four lines. The chef's version is seven assembly instructions. Read it slowly — it is exactly what the chef does:

Only seven moves. Two MOVs (data movement). Two ADDs (arithmetic). One CMP (comparison). One conditional jump (control flow). One return. Every program you have ever run is just an arrangement of these — repeated until something interesting happens.
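The seven moves counted above can be mirrored one-to-one in C. The sketch below is an approximation: the mnemonics in the comments are ARM-flavoured and hypothetical, real compilers will pick their own registers and may optimize the loop away entirely, and the function names are made up here. The upper limit is a parameter so the same code can be tried with other ranges.

```c
#include <assert.h>

/* One C statement per instruction, using goto to make the jump explicit. */
int sum_to_goto(int limit) {
    /* The loop body runs before the comparison, so limit must be at least 1;
       the upper bound keeps the total inside a 32-bit int. */
    assert(limit >= 1 && limit <= 65535);
    int total = 0;          /* 1. MOV  total, #0         data movement */
    int i = 1;              /* 2. MOV  i, #1             data movement */
loop:
    total = total + i;      /* 3. ADD  total, total, i   arithmetic    */
    i = i + 1;              /* 4. ADD  i, i, #1          arithmetic    */
    if (i <= limit)         /* 5. CMP  i, limit          comparison    */
        goto loop;          /* 6. BLE  loop              control flow  */
    return total;           /* 7. RET                    control flow  */
}

/* The same computation the way one would normally write it. */
int sum_to(int limit) {
    assert(limit >= 1 && limit <= 65535);
    int total = 0;
    for (int i = 1; i <= limit; i++) {
        total += i;
    }
    return total;
}
```

Both `sum_to_goto(100)` and `sum_to(100)` return 5050. The `for` loop is the human-friendly spelling; the goto version is closer to what the chef is handed.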

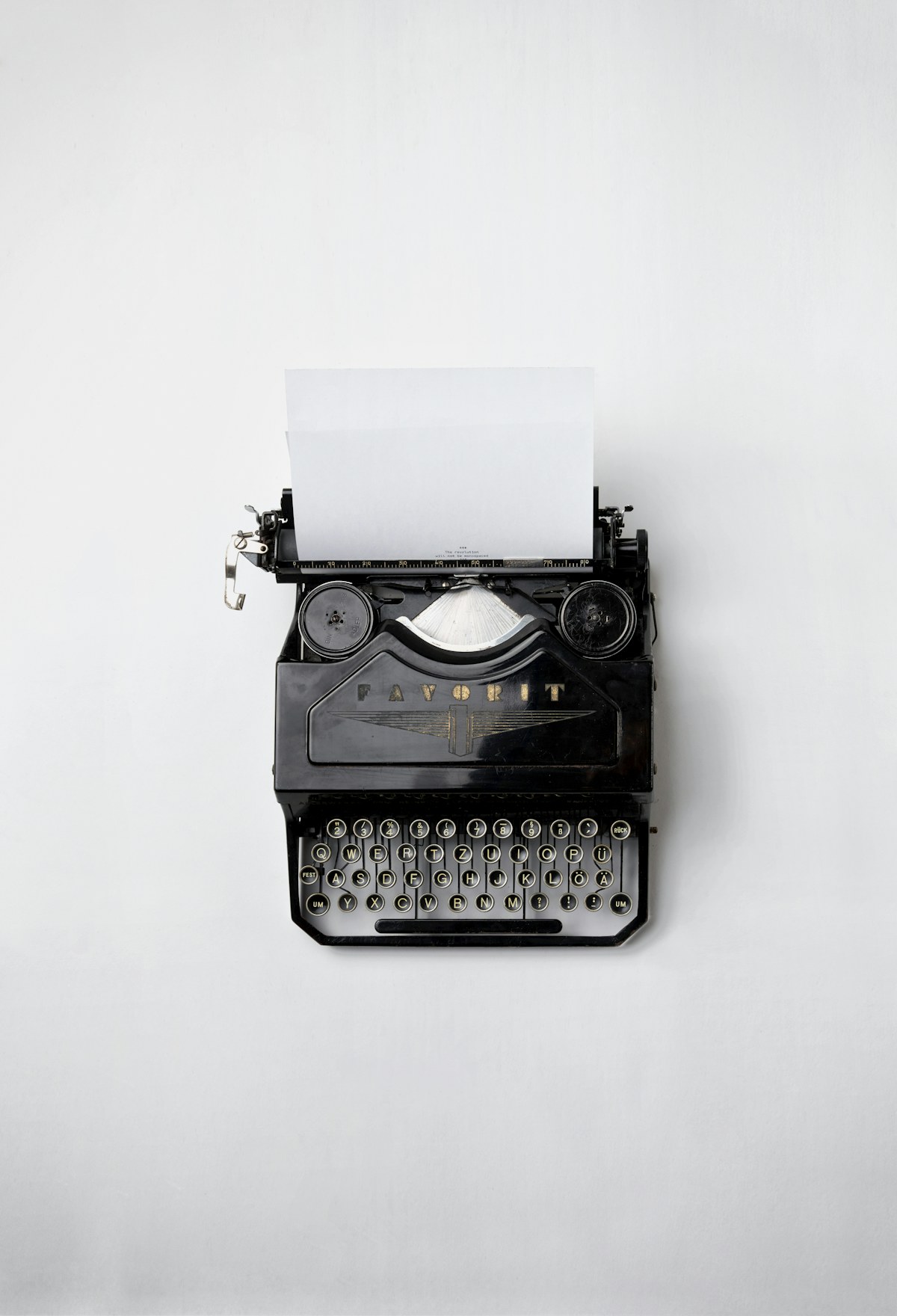

Photo · Florian Klauer / Unsplash

A program is a long, ordered list of small, deliberate instructions. We invented the typewriter to do this for prose. The CPU does it for verbs of arithmetic.

RISC vs CISC, briefly

Two schools of thought about how big the catalogue should be. CISC (x86, Intel/AMD) — many hundreds of instructions, some doing complicated multi-step things in one go. RISC (ARM, RISC-V, Apple Silicon) — a smaller, simpler, very regular catalogue, where every instruction does about the same amount of work.

In the 1980s the argument was bitter. Today the answer is essentially: simple wins on the inside; complex is fine on the outside. Modern x86 chips internally translate their CISC instructions into smaller RISC-like micro-operations before the chef sees them. The phone in your pocket is RISC straight through. The cloud GPU training the next big model runs a simple, regular, RISC-style instruction set inside. RISC won, and CISC chips quietly turned themselves into RISC-with-a-translator.

Why this matters for AI

Modern AI workloads do, mostly, fused multiply-add on huge arrays of numbers — what GPUs and NPUs are built for. The chips that train language models have specialized instructions that take two big matrix tiles and produce a third in a single op. Those instructions weren't in the 1970s catalogue. They were added because someone realised: if we make the chef's vocabulary include "multiply this whole tile by that whole tile", we save a billion fetch-decode-execute cycles.
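To get a feel for what a tile instruction replaces, here is a minimal scalar sketch of "multiply this tile by that tile and accumulate". The 4x4 tile size, the row-major layout, and the function name are assumptions made for the example; real matrix hardware uses larger tiles and its own data formats.

```c
#include <assert.h>
#include <stddef.h>

enum { TILE = 4 };  /* assumed tile edge; hardware tiles are usually bigger */

/* Computes c += a * b for row-major TILE x TILE tiles, one multiply-add at a time.
   Returns how many scalar multiply-adds were needed. */
unsigned tile_multiply_accumulate(const float *a, const float *b, float *c) {
    assert(a != NULL && b != NULL && c != NULL);
    unsigned multiply_adds = 0;
    for (int row = 0; row < TILE; row++) {
        for (int col = 0; col < TILE; col++) {
            float acc = c[row * TILE + col];
            for (int k = 0; k < TILE; k++) {
                acc += a[row * TILE + k] * b[k * TILE + col];  /* one multiply-add */
                multiply_adds++;
            }
            c[row * TILE + col] = acc;
        }
    }
    return multiply_adds;
}
```

Even this tiny tile costs 64 multiply-adds, plus the loads, compares, and jumps of three nested loops. A single tile instruction folds all of that into one trip through fetch, decode, and execute, and the saving grows with the cube of the tile edge.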

Adding to the catalogue is how an industry pivots. The instruction set is not a museum piece. It's a living constitution.

The chef knows about a hundred verbs. Everything is built from that.

Try it yourself

Watch the chef work in real time:

- Open godbolt.org in another tab. Paste a tiny C function (e.g. `int add(int a, int b) { return a + b; }`). Pick a compiler. Watch the right-hand pane: that is what the chef actually executes.
- From a terminal: write `add.c`, run `gcc -S -O2 add.c`. Open `add.s`. Same view, locally.
- Try changing `+` to `*`. Try wrapping the body in a `for` loop. Watch how the assembly stretches and folds. After a while, you start to see the chef.

What's next

You now know what the chef does, byte by byte. But the chef does not do it alone. There are dozens of chefs in your machine, and tens of thousands of orders in flight at any moment. Who decides which order each chef works on? Who keeps them from elbowing each other?

Week 05 is The Traffic Cop — what an operating system actually does, and why "process scheduling" is the most under-appreciated invention in computing.

Photo credits

All photos are free under the Unsplash license. Card catalog · Ilya Semenov · Sheet music · Europeana · Typewriter · Florian Klauer. The fetch-decode-execute diagram is inline SVG.