Photo · Johannes Plenio / Unsplash

The chef from last week reads instructions. But what is an instruction? What is the chef actually looking at?

Strip away every layer — the operating system, the assembly code, the machine language — and you arrive at one of the strangest, most beautiful facts in engineering. The chef reads switches. Tiny ones. Trillions of them. Each one is on, or off. That is all.

Not metaphorically. Literally. There is no other content. Your phone, this webpage, every photo you have ever taken, every response GPT-5 has ever generated: every bit of it is, at the bottom, a pattern of switches. And those switches are drawn with light.

A switch is the smallest possible decision

Hold a light switch in your hand. It can be in two states: up or down. On or off. 1 or 0. True or false. Yes or no.

That single binary choice is the atom of all computation. We call it a bit — short for "binary digit". One bit is the smallest amount of information that can exist. You can't divide it further; "half a yes" isn't a coherent idea. Computer science is built on the discovery that every question — no matter how complex — can be reduced to a sequence of yes/no answers, given enough of them.

One switch gives two patterns, and every extra switch doubles the count. Four switches, sixteen possible patterns, sixteen possible values. Eight switches get you 256 patterns, enough to encode every letter, digit, and punctuation mark in English. We named that group a byte. Almost everything in modern computing is built in bytes.
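To make the doubling concrete, here is a minimal Python sketch that lists every on/off pattern for a given number of switches. The function name `patterns` is just an illustrative choice, not anything standard.

```python
from itertools import product

def patterns(n_switches):
    """Return every on/off pattern for n switches as strings of 0s and 1s."""
    return ["".join(bits) for bits in product("01", repeat=n_switches)]

four = patterns(4)
print(len(four))          # 16
print(four[:4])           # ['0000', '0001', '0010', '0011']
print(len(patterns(8)))   # 256, one byte's worth of patterns
```

The count is always 2 to the power of the number of switches, which is why each extra switch is worth as much as all the previous ones combined.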

A transistor is a switch made of light

The first computers, in the 1940s, used physical switches — relays that clattered, vacuum tubes that glowed, and burned out, and got replaced by exhausted operators with bandages on their fingers.

Photo · Egor Komarov / Unsplash

A vacuum tube, the original electronic switch. Each one the size of a thumb. Today's largest chips pack some two hundred billion switches into roughly the same volume.

Then in 1947, three physicists at Bell Labs, John Bardeen, Walter Brattain, and William Shockley, figured out how to make a switch from a sliver of semiconductor and a pinch of impurities. That first one was germanium; silicon came a few years later. They called it the transistor. It had no moving parts, used barely any power, and its descendants can flip on and off billions of times a second. The Nobel Prize followed within a decade.

The mechanism is almost embarrassing in its simplicity. A transistor has three terminals: source, gate, and drain. When the gate has voltage on it, a microscopic channel opens between source and drain, and current flows. No voltage on the gate, no channel, no current. That's it. That's the whole device. Current in, current out, controlled by a voltage.
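As a rough illustration of how far that one behaviour goes, here is a Python sketch that treats a transistor as an idealised switch and wires a few together. The `threshold` value and the pull-up resistor that holds the output high are assumptions made for the sketch; real devices are analog and considerably messier.

```python
def transistor(gate_voltage, threshold=0.5):
    """Idealised switch: the source-drain channel conducts only when
    the gate voltage is above a threshold (hypothetical value)."""
    return gate_voltage > threshold

def inverter(a):
    # One transistor between the output and ground. An assumed pull-up
    # resistor holds the output high whenever the transistor is off.
    return 0 if transistor(a) else 1

def nand(a, b):
    # Two transistors in series: the output is pulled low only when
    # both channels are open at once.
    return 0 if (transistor(a) and transistor(b)) else 1

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", nand(a, b))
```

The printed table is 1, 1, 1, 0: the output is off only when both inputs are on. That NAND gate matters because every other logic gate can be assembled from copies of it.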

And here's where the "light" comes in. We don't build transistors one at a time any more, laying them down individually with tweezers. We print them, in patterns of ultraviolet light shone through a stencil onto a polished disc of silicon. The process is called photolithography. The transistors in the phone in your hand were drawn with light, in features only tens of atoms wide. They are, almost literally, sketches in silicon, made of light.

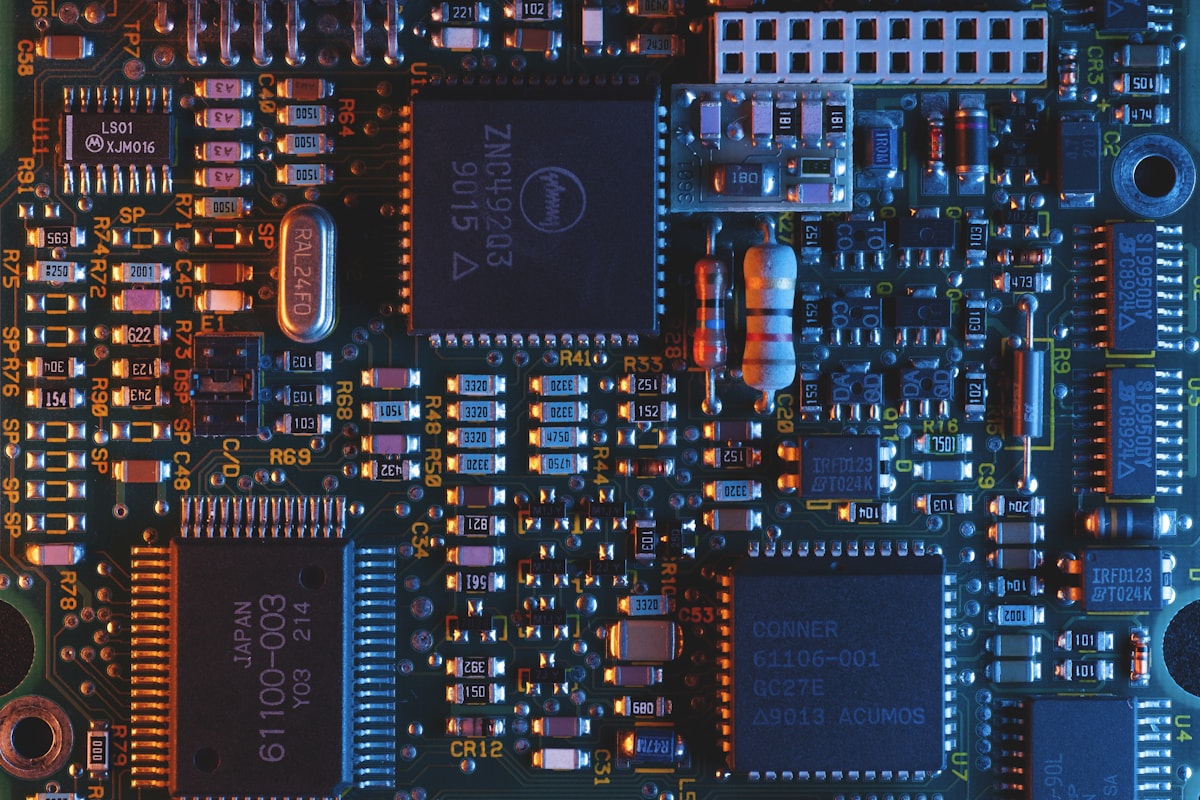

Photo · Umberto / Unsplash

The traces you can see with the naked eye are highways. The actual transistors are far below the resolution of your eye, drawn with extreme-ultraviolet light at a wavelength of 13.5 nanometres.

Binary — counting with switches

So the chef reads switches. But how do switches mean numbers? The trick is positional — the same trick we use with our familiar decimal digits.

In decimal, the rightmost column is "ones", the next is "tens", the next "hundreds". Each column is 10× the one before. 247 means 2×100 + 4×10 + 7×1.

Binary is exactly the same idea, but with only two digits. The columns go: ones, twos, fours, eights, sixteens. Each column is 2× the one before. 1011 means 1×8 + 0×4 + 1×2 + 1×1 = 11.
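The column arithmetic works the same way in either base, which a short Python sketch can show. The helper `from_digits` is a hypothetical name for this example; Python's built-in `int(text, base)` does the same job.

```python
def from_digits(digits, base):
    """Add up each digit times its column weight.
    The rightmost column is worth 1, the next is worth `base`, and so on."""
    total = 0
    for position, digit in enumerate(reversed(digits)):
        total += int(digit) * base ** position
    return total

print(from_digits("247", 10))   # 247  (2*100 + 4*10 + 7*1)
print(from_digits("1011", 2))   # 11   (1*8 + 0*4 + 1*2 + 1*1)
print(int("1011", 2))           # 11, the built-in equivalent
print(bin(11))                  # 0b1011, going the other way
```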

Eight switches get you 256 different patterns. We chose to name a few of those patterns "letter A", "letter B", "the digit 7", "an exclamation mark". The naming is called ASCII (and its modern multi-byte cousin UTF-8). Sixteen switches get you 65,536 patterns, enough for the Chinese characters in everyday use, though not for every character ever recorded. Sixty-four switches get you 18 quintillion. Beyond that, the universe runs out of interesting things to count before the switches do.
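You can look at those agreed patterns directly. This Python sketch encodes a character as UTF-8 and prints each byte as eight switches; `bits_of` is an illustrative helper name, not a library function.

```python
def bits_of(text):
    """Encode text as UTF-8 and show each byte as eight on/off switches."""
    return [format(byte, "08b") for byte in text.encode("utf-8")]

print(bits_of("A"))             # ['01000001']
print(bits_of("7"))             # ['00110111']
print(bits_of("!"))             # ['00100001']
print(len(bits_of("\u4e2d")))   # 3: this Chinese character takes three bytes in UTF-8
print(2 ** 16)                  # 65536
print(2 ** 64)                  # 18446744073709551616
```

Nothing in the switches says "A". The pattern 01000001 means "A" only because the encoding table says so.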

This is why everything — every photo, every emoji, every audio sample, every word in this sentence — can be stored in computers. Computers don't store things. They store patterns of bits. The pattern means a thing only because we agreed, decades ago, on which patterns mean what.

From one transistor to two hundred billion

The first transistor, in 1947, was built by hand and was the size of a fingertip. Within about a dozen years engineers learned to put two on a single piece of silicon. Then four. Then sixteen. Then a hundred. Moore's Law, the empirical observation that the number of transistors on a chip doubles roughly every two years, has held, with some fits and starts, for sixty years.
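A back-of-the-envelope sketch shows what that doubling does over time. The starting point here is an assumption chosen for illustration: Intel's 4004 from 1971, with about 2,300 transistors. The two-year doubling period is taken at face value, which real chips only roughly follow.

```python
def moores_law(start_count, start_year, year, doubling_period=2):
    """Project a transistor count forward, assuming one doubling
    every `doubling_period` years (an idealisation)."""
    doublings = (year - start_year) / doubling_period
    return start_count * 2 ** doublings

# Assumed starting point: Intel 4004, 1971, about 2,300 transistors.
projected = moores_law(2300, 1971, 2025)
print(f"{projected:.2e}")   # 3.09e+11, a few hundred billion
```

Twenty-seven doublings turn a couple of thousand into a few hundred billion, which is the same ballpark as today's largest chips.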

Two hundred billion transistors. One chip. The numbers stop feeling real after a while, so try this: there are roughly 86 billion neurons in your brain. A modern AI chip has more switches than your head has neurons. Whether the analogy is useful is a question for Phase 7. But the raw scale is no longer disputed.

Try it yourself

Hold up your right hand, palm facing you. You have five fingers — five switches. Each finger up = 1, finger down = 0. Read them right-to-left as binary digits worth 1, 2, 4, 8, 16.

- All fingers down: 0

- Just thumb up: 1

- Just index up: 2

- Thumb + index: 3

- Just middle finger up: 4 (yes — that's where this gesture lives in binary)

- All five up: 31

You can now count to thirty-one on one hand. Two hands get you to 1023. This is exactly what your CPU is doing, just with billions of fingers, made of silicon, each flipping billions of times a second.
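If you want to check your fingers, here is a small Python sketch of the same scheme. The finger names and the helpers `hand_value` and `fingers_for` are made up for this example.

```python
# Fingers in order of value: thumb is worth 1, little finger is worth 16.
FINGERS = ["thumb", "index", "middle", "ring", "little"]

def hand_value(raised):
    """Return the number shown by a set of raised fingers."""
    return sum(2 ** i for i, name in enumerate(FINGERS) if name in raised)

def fingers_for(n):
    """Return which fingers to raise to show n (0 to 31)."""
    if not 0 <= n <= 31:
        raise ValueError("one hand only counts from 0 to 31")
    return [name for i, name in enumerate(FINGERS) if (n >> i) & 1]

print(hand_value(set()))               # 0
print(hand_value({"thumb", "index"}))  # 3
print(hand_value({"middle"}))          # 4
print(hand_value(set(FINGERS)))        # 31
print(fingers_for(19))                 # ['thumb', 'index', 'little']
```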

Why this matters for AI

Every neural network, every ChatGPT response, every Stable Diffusion image, every Tesla lane-change, boils down to a torrent of multiplications and additions. Lots of them. A large language model does roughly one multiplication per active parameter for every token it generates, which for today's largest models means hundreds of billions to trillions.

And every one of those multiplications is, at the bottom, switches flipping. Two binary numbers go in. The transistors gate, drain, gate, drain — billions of times — and a third binary number falls out. The transistor is the smallest unit. Everything else is scaffolding.
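Here is one way to watch a number fall out of nothing but switch logic, as a minimal Python sketch. Everything is built from a single NAND gate. The ripple-carry adder and shift-and-add multiplier below are textbook constructions chosen for clarity; the multipliers in real chips are far more elaborate, and all function names and bit widths are assumptions for this example.

```python
def nand(a, b):
    """The one primitive gate: output is 0 only when both inputs are 1."""
    return 0 if (a and b) else 1

def xor(a, b):
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))

def and_(a, b):
    return nand(nand(a, b), nand(a, b))

def or_(a, b):
    return nand(nand(a, a), nand(b, b))

def full_adder(a, b, carry_in):
    """Add three bits, returning (sum bit, carry-out bit)."""
    partial = xor(a, b)
    total = xor(partial, carry_in)
    carry_out = or_(and_(a, b), and_(partial, carry_in))
    return total, carry_out

def add(x, y, width=8):
    """Ripple-carry addition, one bit column at a time.
    The result wraps around at `width` bits, as it would in hardware."""
    result, carry = 0, 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result

def multiply(x, y, width=16):
    """Shift-and-add: add a shifted copy of x for every 1 bit in y."""
    product = 0
    for i in range(width):
        if (y >> i) & 1:
            product = add(product, x << i, width)
    return product

print(add(11, 5))        # 16
print(multiply(11, 5))   # 55
```

Multiplying 11 by 5 this way takes a few hundred NAND evaluations. A chip does the equivalent in parallel, in hardware, for billions of pairs of numbers every second.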

When people say "AI runs on silicon", they mean it. AI is, ultimately, a particularly clever way to flip switches.

A bit isn't a metaphor. It's a voltage on a wire. Everything else is what we agreed it means.

What's next

You now know what the chef is reading: voltages on wires, ones and zeros, switches in patterns. But the chef does not run back to the pantry between every instruction — the kitchen has tiers. Pockets, drawers, a sideboard, the counter, the pantry. Each one bigger and slower than the last.

Week 03 is The Pocket vs. The Warehouse — CPU caches and the latency hierarchy. Why a 100× speedup, in practice, often comes from where the data is, not how the algorithm works.

Photo credits

All photos are free under the Unsplash license. Lightbulb · Johannes Plenio · Vacuum tube · Egor Komarov · Microchip · Umberto. Switches and transistor diagrams are inline SVG / CSS.